Step By Step: Upgrade 10gR2 RAC to 11gR2 RAC on Oracle Enterprise Linux 5 (32 bit) Platform

By Bhavin Hingu

NOTE: This upgrade process also applies to upgrading 11gR1 RAC to 11gR2 RAC.

This document shows, step by step, how to upgrade a database from 10gR2 RAC to 11gR2 RAC. I chose the upgrade path below, which allowed me to upgrade 10gR2 Clusterware and ASM to 11gR2 Grid Infrastructure. In this path, the original 10gR2 CRS installation is hidden so that it appears as if no CRS exists. A fresh installation of 11gR2 Grid Infrastructure is then performed, and the 10gR2 ASM diskgroups are moved to and mounted from the new 11gR2 Grid HOME. At this stage, the original 10gR2 RAC database is managed by the 11gR2 Grid HOME. Finally, the 11gR2 RAC database home is installed and the original 10gR2 RAC database is manually upgraded to 11gR2 RAC.

| | Existing 10gR2 RAC setup (Before Upgrade) | Target 11gR2 RAC Setup (After Upgrade) |
|---|---|---|
| Clusterware | Oracle 10gR2 Clusterware 10.2.0.1 | Oracle 11gR2 Grid Infrastructure 11.2.0.1 |
| ASM Binaries | 10gR2 RAC 10.2.0.1 | Oracle 11gR2 Grid Infrastructure 11.2.0.1 |
| Cluster Name | lab | lab |
| Cluster Nodes | node1, node2, node3 | node1, node2, node3 |
| Clusterware Home | /u01/app/oracle/crs (CRS_HOME) | /u01/app/grid11201 (GRID_HOME) |
| Clusterware Owner | oracle:(oinstall, dba) | oracle:(oinstall, dba) |
| VIPs | node1-vip, node2-vip, node3-vip | node1-vip, node2-vip, node3-vip |
| SCAN | N/A | lab-scan.hingu.net |
| SCAN_LISTENER Host/port | N/A | Scan VIPs Endpoint: (TCP:1525) |
| OCR and Voting Disks Storage Type | Raw Devices | ASM |
| OCR Disks | /dev/raw/raw1, /dev/raw/raw2 | +GIS_FILES |
| Voting Disks | /dev/raw/raw3, /dev/raw/raw4, /dev/raw/raw5 | +GIS_FILES |
| ASM_HOME | /u01/app/oracle/asm | /u01/app/grid11201 |
| ASM_HOME Owner | oracle:(oinstall, dba) | oracle:(oinstall, dba) |
| ASMLib user:group | oracle:oinstall | oracle:oinstall |
| ASM LISTENER | LISTENER (TCP:1521) | LISTENER (TCP:1521) |
| DB Binaries | Oracle 10gR2 RAC (10.2.0.1) | Oracle 11gR2 RAC (11.2.0.1) |
| DB_HOME | /u01/app/oracle/db | /u01/app/oracle/db11201 |
| DB_HOME Owner | oracle:(oinstall, dba) | oracle:(oinstall, dba) |
| DB LISTENER | LAB_LISTENER | LAB_LISTENER |
| DB Listener Host/port | node1-vip, node2-vip, node3-vip (port 1530) | node1-vip, node2-vip, node3-vip (port 1530) |
| DB Storage Type, File Management | ASM with OMFs | ASM with OMFs |
| ASM diskgroups for DB and FRA | DATA, FRA | DATA, FRA |
| OS Platform | Oracle Enterprise Linux 5.5 (32 bit) | Oracle Enterprise Linux 5.5 (32 bit) |

HERE’s an existing 10gR2 RAC Setup in detail

The upgrade process is composed of the stages below:

· Pre-Upgrade Tasks
· Hide the 10gR2 Clusterware installation.
· Install 11gR2 Grid Infrastructure.
· Move 10gR2 ASM Diskgroups to 11gR2 Grid Infrastructure.
· Register 10gR2 RAC database and services to 11gR2 Grid Infrastructure.
· Install 11gR2 RAC for database home.
· Manually upgrade original 10gR2 RAC database to 11gR2 RAC.

Pre-Upgrade Tasks:

· Install/Upgrade RPMs required for 11gR2 RAC Installation
· Add SCAN VIPs to the DNS
· Setup of Network Time Protocol
· Start the nscd on all the RAC nodes
· Create 3 ASM Disks for 11gR2 OCR and Voting Disks.
· Backing up 10gR2 existing HOMEs and database

Minimum Required RPMs for 11gR2 RAC on OEL 5.5 (All the 3 RAC Nodes):

The command below verifies whether the specified RPMs are installed. Any missing RPMs can be installed from the OEL Media Pack.

rpm -q binutils compat-libstdc++-33 elfutils-libelf elfutils-libelf-devel elfutils-libelf-devel-static \
gcc gcc-c++ glibc glibc-common glibc-devel glibc-headers kernel-headers ksh libaio libaio-devel \
libgcc libgomp libstdc++ libstdc++-devel make numactl-devel sysstat unixODBC unixODBC-devel
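With this many packages, the missing ones are easy to overlook in the rpm -q output. The following is a minimal sketch of a filter for that output. It assumes the standard rpm message "package NAME is not installed"; the function name missing_rpms is hypothetical and not part of the original procedure.

```shell
#!/bin/bash
# Hypothetical helper: read `rpm -q ...` output on stdin and print only
# the names of packages that rpm reports as not installed.
missing_rpms() {
    # rpm prints "package <name> is not installed" for each missing package
    awk '/^package .* is not installed$/ { print $2 }'
}

# Usage (on each RAC node):
#   rpm -q binutils numactl-devel sysstat | missing_rpms
```

An empty result would mean that every queried package is present on that node.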

I had to install the RPM below.

numactl-devel -> Located on the 3rd CD of the OEL 5.5 Media Pack.

[root@node1 ~]# rpm -ivh numactl-devel-0.9.8-11.el5.i386.rpm
warning: numactl-devel-0.9.8-11.el5.i386.rpm: Header V3 DSA signature: NOKEY, key ID 1e5e0159
Preparing...                ########################################### [100%]
   1:numactl-devel          ########################################### [100%]
[root@node1 ~]#

I had to upgrade the cvuqdisk RPM by removing it and installing the higher version. This step is also taken care of by the rootupgrade.sh script.

cvuqdisk -> Available on the Grid Infrastructure Media (under the rpm folder)

rpm -e cvuqdisk
export CVUQDISK_GRP=oinstall
echo $CVUQDISK_GRP
rpm -ivh cvuqdisk-1.0.7-1.rpm

SCAN VIPs to configure in DNS for lab-scan.hingu.net:

192.168.2.151
192.168.2.152
192.168.2.153

HERE is the existing DNS setup. In that setup, the two files below were modified to add entries for these SCAN VIPs.

/var/named/chroot/var/named/hingu.net.zone
/var/named/chroot/var/named/2.168.192.in-addr.arpa.zone

/var/named/chroot/var/named/hingu.net.zone

$TTL 1d
hingu.net. IN SOA lab-dns.hingu.net. root.hingu.net. (
    100 ; se = serial number
    8h ; ref = refresh
    5m ; ret = update retry
    3w ; ex = expiry
    3h ; min = minimum
    )
    IN NS lab-dns.hingu.net.
; DNS server
lab-dns IN A 192.168.2.200
; RAC Nodes Public name
node1 IN A 192.168.2.1
node2 IN A 192.168.2.2
node3 IN A 192.168.2.3
; RAC Nodes Public VIPs
node1-vip IN A 192.168.2.51
node2-vip IN A 192.168.2.52
node3-vip IN A 192.168.2.53
; 3 SCAN VIPs
lab-scan IN A 192.168.2.151
lab-scan IN A 192.168.2.152
lab-scan IN A 192.168.2.153
; Storage Network
nas-server IN A 192.168.1.101
node1-nas IN A 192.168.1.1
node2-nas IN A 192.168.1.2
node3-nas IN A 192.168.1.3

/var/named/chroot/var/named/2.168.192.in-addr.arpa.zone

$TTL 1d
@ IN SOA lab-dns.hingu.net. root.hingu.net. (
    100 ; se = serial number
    8h ; ref = refresh
    5m ; ret = update retry
    3w ; ex = expiry
    3h ; min = minimum
    )
    IN NS lab-dns.hingu.net.
; DNS machine name in reverse
200 IN PTR lab-dns.hingu.net.
; RAC Nodes Public Name in Reverse
1 IN PTR node1.hingu.net.
2 IN PTR node2.hingu.net.
3 IN PTR node3.hingu.net.
; RAC Nodes Public VIPs in Reverse
51 IN PTR node1-vip.hingu.net.
52 IN PTR node2-vip.hingu.net.
53 IN PTR node3-vip.hingu.net.
; RAC Nodes SCAN VIPs in Reverse
151 IN PTR lab-scan.hingu.net.
152 IN PTR lab-scan.hingu.net.
153 IN PTR lab-scan.hingu.net.

Restart the DNS Service (named):

service named restart

NOTE: nslookup for lab-scan should return the three SCAN IP addresses in a different (round-robin) order each time.
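A quick way to confirm the SCAN entries after restarting named is to collect the addresses returned for lab-scan and compare them with the three VIPs listed earlier. The sketch below assumes the usual nslookup layout, in which each "Name:" line is followed by an "Address:" line; the function name scan_addresses is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read nslookup output on stdin and print, in sorted
# order, the answer address that follows each "Name:" line. The DNS
# server's own "Address:" line is skipped because no "Name:" precedes it.
scan_addresses() {
    awk '/^Name:/ { want = 1; next }
         want && /^Address/ { print $2; want = 0 }' | sort
}

# Usage: nslookup lab-scan.hingu.net | scan_addresses
```

Sorting hides the rotation on purpose, so that repeated lookups can be compared for completeness; the raw nslookup output is what shows the changing order.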

Network Time Protocol Setting (On all the RAC Nodes):

Oracle Cluster Time Synchronization Service (ctss) was chosen over the Linux-provided ntpd. So, ntpd needs to be deactivated and its configuration removed to avoid any possibility of it conflicting with ctss.

# /sbin/service ntpd stop
# chkconfig ntpd off
# mv /etc/ntp.conf /etc/ntp.conf.org

Also remove the following file:

/var/run/ntpd.pid
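Oracle's documentation describes ctss as staying in observer mode when it detects an NTP configuration, so it may be worth confirming on every node that neither file is left behind. The following is a minimal sketch under that assumption; the function name ntp_leftovers and its optional root-prefix argument are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: list the NTP files that are still present under ROOT.
# ROOT defaults to "/"; a different prefix is only useful for testing.
ntp_leftovers() {
    local root="${1:-/}"
    local f
    for f in etc/ntp.conf var/run/ntpd.pid; do
        if [ -e "${root%/}/$f" ]; then
            echo "${root%/}/$f"
        fi
    done
    return 0
}

# Usage (on each RAC node): ntp_leftovers
```

No output would suggest that the node is ready for ctss to take over time synchronization.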

Network Service Cache Daemon (all the RAC nodes)

The Network Service Cache Daemon was started on all the RAC nodes.

service nscd start

Create

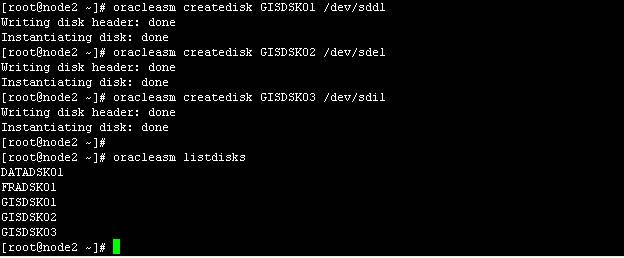

ASM Disks for diskgroup GIS_FILES to store 11gR2 OCR and Voting Disks

On the rest of the Nodes:

oracleasm scandisks

Backing Up ORACLE_HOMEs/database:

Steps I followed to take the backup of the ORACLE_HOMEs before the upgrade:

On node1:

mkdir backup
cd backup
dd if=/dev/raw/raw1 of=ocr_disk_10gr2.bkp
dd if=/dev/raw/raw3 of=voting_disk_10gr2.bkp
tar cvf node1_crs_10gr2.tar /u01/app/oracle/crs/*
tar cvf node1_asm_10gr2.tar /u01/app/oracle/asm/*
tar cvf node1_db_10gr2.tar /u01/app/oracle/db/*
tar cvf node1_etc_oracle /etc/oracle/*
cp /etc/inittab etc_inittab
mkdir etc_init_d
cd etc_init_d
cp /etc/init.d/init* .

On node2:

mkdir backup
cd backup
tar cvf node2_crs_10gr2.tar /u01/app/oracle/crs/*
tar cvf node2_asm_10gr2.tar /u01/app/oracle/asm/*
tar cvf node2_db_10gr2.tar /u01/app/oracle/db/*
tar cvf node2_etc_oracle /etc/oracle/*
cp /etc/inittab etc_inittab
mkdir etc_init_d
cd etc_init_d
cp /etc/init.d/init* .

On node3:

mkdir backup
cd backup
tar cvf node3_crs_10gr2.tar /u01/app/oracle/crs/*
tar cvf node3_asm_10gr2.tar /u01/app/oracle/asm/*
tar cvf node3_db_10gr2.tar /u01/app/oracle/db/*
tar cvf node3_etc_oracle /etc/oracle/*
cp /etc/inittab etc_inittab
mkdir etc_init_d
cd etc_init_d
cp /etc/init.d/init* .
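The tar commands above are identical on every node apart from the node name, and the trailing /* leaves out any hidden files at the top level of each home. As an alternative, the sketch below archives the directory itself. The function name backup_home and its arguments are hypothetical; this is not what was run in the original procedure.

```shell
#!/bin/bash
# Hypothetical helper: archive a directory (including hidden files) into
# <dest_dir>/<label>.tar. Paths inside the archive are stored relative
# to the parent of the source directory.
backup_home() {
    local src="$1" dest_dir="$2" label="$3"
    [ -d "$src" ] || { echo "no such directory: $src" >&2; return 1; }
    mkdir -p "$dest_dir" || return 1
    tar -cf "$dest_dir/$label.tar" -C "$(dirname "$src")" "$(basename "$src")"
}

# Usage on node1 (same home paths as above):
#   backup_home /u01/app/oracle/crs "$HOME/backup" node1_crs_10gr2
#   backup_home /u01/app/oracle/asm "$HOME/backup" node1_asm_10gr2
#   backup_home /u01/app/oracle/db  "$HOME/backup" node1_db_10gr2
```

Storing relative paths also allows the archive to be extracted into a scratch location for inspection without touching the live homes.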

A full RMAN database backup was also taken.

Hide the 10gR2 CRS:

In order to install 11gR2 GI, the existing 10gR2 CRS must be hidden so that 11gR2 GI is installed without any conflict with 10gR2 CRS.

Shut down CRS on all the RAC nodes:

crsctl stop crs

Renamed these files/directories:

mv /etc/oracle /etc/oracle_bkp
mkdir /etc/init.d/bkp
mv /etc/init.d/init* /etc/init.d/bkp

Removed the lines below from /etc/inittab (the inittab was already backed up in the Pre-Upgrade tasks as well):

h1:35:respawn:/etc/init.d/init.evmd run >/dev/null 2>&1 </dev/null
h2:35:respawn:/etc/init.d/init.cssd fatal >/dev/null 2>&1 </dev/null
h3:35:respawn:/etc/init.d/init.crsd run >/dev/null 2>&1 </dev/null

Removed the network socket files:

rm -rf /tmp/.oracle
rm -rf /var/tmp/.oracle

Rebooted all the RAC nodes at this stage:

reboot
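The inittab edit has to be repeated on every node, so it can help to script it rather than edit by hand. The following is a minimal sketch that filters out the three CRS respawn entries and writes the result to standard output for review. The function name strip_crs_inittab is hypothetical; the original procedure edited the file manually.

```shell
#!/bin/bash
# Hypothetical helper: print the contents of an inittab-style file with the
# three 10gR2 CRS respawn entries (init.evmd, init.cssd, init.crsd) removed.
strip_crs_inittab() {
    sed -e '/\/etc\/init\.d\/init\.evmd/d' \
        -e '/\/etc\/init\.d\/init\.cssd/d' \
        -e '/\/etc\/init\.d\/init\.crsd/d' "$1"
}

# Usage (as root, after backing up /etc/inittab):
#   strip_crs_inittab /etc/inittab > /tmp/inittab.new
#   diff /etc/inittab /tmp/inittab.new     # review before replacing
```

Writing to a separate file first keeps the live /etc/inittab untouched until the difference has been checked.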

Install 11gR2 Grid Infrastructure:

Grid Infrastructure installation process:

Installation Option:
Install and Configure Grid Infrastructure for a Cluster

Installation Type:
Advanced Installation

Product Language:
English

Grid Plug and Play:
Cluster Name: lab
SCAN Name: lab-scan.hingu.net
SCAN Port: 1525
Configure GNS: Unchecked

Cluster Node Information:
Entered the Hostname and VIP names of the Cluster Nodes.
Checked the SSH connectivity

Network Interface Usage:
OUI picked up all the interfaces correctly. I did not have to make any changes here.

Storage Option:
Automatic Storage Management (ASM)

Create ASM Disk Group:
Disk Group Name: GIS_FILES
Redundancy: Normal
Candidate Disks: ORCL:GISDSK01, ORCL:GISDSK02, ORCL:GISDSK03

ASM Password:
Use Same Password for these accounts. (Ignored password warnings.)

Failure Isolation:
Do not use Intelligent Platform Management Interface (IPMI)

Operating System Groups:
ASM Database Administrator (OSDBA) Group: dba
ASM Instance Administrator Operator (OSOPER) Group: oinstall
ASM Instance Administrator (OSASM) Group: oinstall

Installation Location:
Oracle Base: /u01/app/oracle
Software Location: /u01/app/grid11201

Create Inventory:
Inventory Directory: /u01/app/oraInventory

Prerequisite Checks:
No issues/errors

Summary Screen:
Verified the information here and pressed “Finish” to start installation.

At the end of the installation, the root.sh script needed to be executed as the root user.

/u01/app/grid11201/root.sh

After the

successful completion of this script, the 11g R2 High Availability Service

(CRS, CSS and EVMD) started up and running.

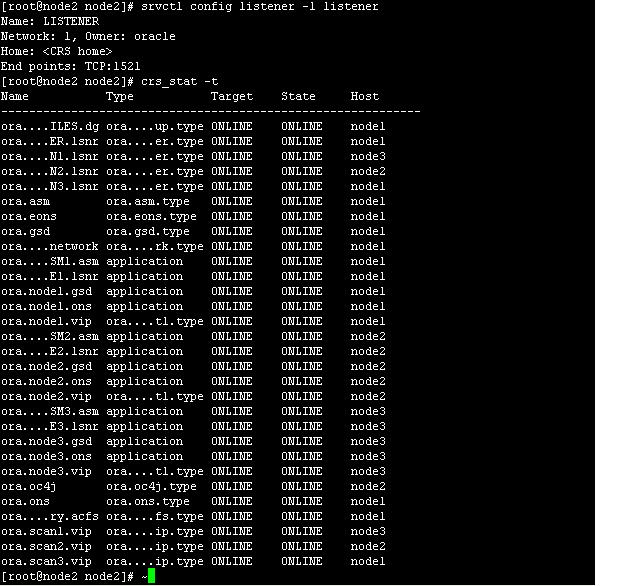

Verified

that the status of the installation using below set of commands.

crsctl check cluster –all

crs_stat –t –v

crsctl check ctss

The GSD and

OC4J resources are by default disabled. Enabled GSD them as below.

srvctl enable nodeapps –g

srvctl start nodeapps –n node1

srvctl start nodeapps –n node2

srvctl start nodeapps –n node3

srvctl enable oc4j

srvctl start oc4j

Invoked

netca from 11gR2 Grid Infrastructure Home to reconfigure the listener “LISTENER”

/u01/app/oracle/grid11201/bin/netca

HERE’s the

detailed Screen Shots of Installing 11gR2 Grid Infrastructure

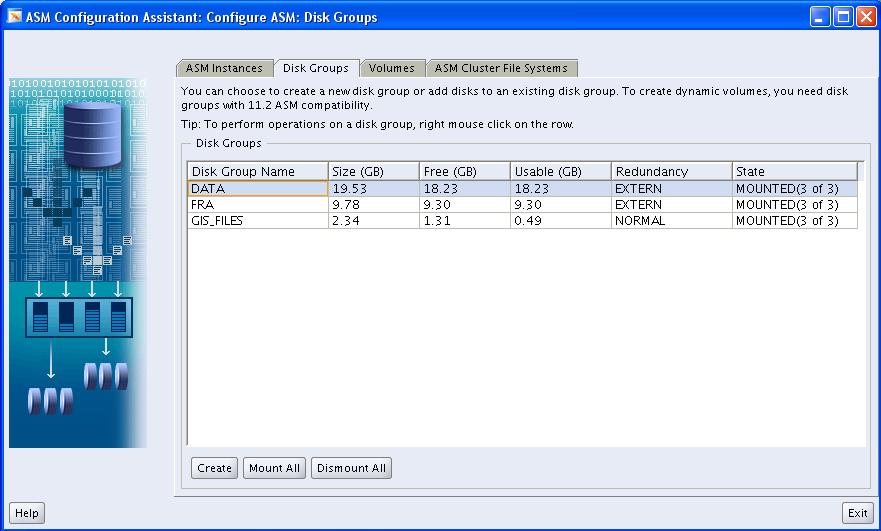

Move 10gR2 ASM Diskgroups to 11gR2 Grid

Infrastructure:

·

Invoked the asmca from the 11gR2 Grid Infrastructure HOME (/u01/app/grid11201).

·

Mount the FRA and DATA diskgroup using ASMCA. This

way, asmca registered and moved the DATA and FRA diskgroups to the 11gR2 GI.

/u01/app/grid11201/bin/asmca

HERE’s

the detailed Screen Shots of Migrating 10gR2 ASM Disk Groups to 11gR2 Grid

Infrastructure

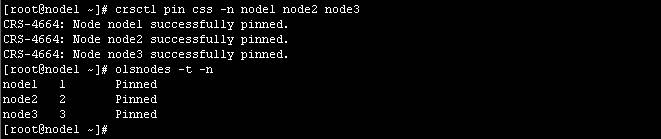

Start the 10gR2 RAC Database using SQLPLUS.

While starting the 10gR2 RAC database, the error below was received. This is because, as per Oracle, 11gR2 has a dynamic cluster configuration, whereas 11gR1 and older releases have a static configuration. So, in order to run older-version databases under 11gR2, the cluster configuration needs to be made persistent by pinning the nodes.

[oracle@node1 ~]$ which sqlplus
/u01/app/oracle/db/bin/sqlplus
[oracle@node1 ~]$ sqlplus / as sysdba

SQL*Plus: Release 10.2.0.3.0 - Production on Thu Oct 20 01:39:58 2011

Copyright (c) 1982, 2006, Oracle. All Rights Reserved.

Connected to an idle instance.

SQL> startup
ORA-01078: failure in processing system parameters
ORA-01565: error in identifying file '+DATA/labdb/spfilelabdb.ora'
ORA-17503: ksfdopn:2 Failed to open file +DATA/labdb/spfilelabdb.ora
ORA-15077: could not locate ASM instance serving a required diskgroup
ORA-29701: unable to connect to Cluster Manager
SQL>

/u01/app/grid11201/bin/crsctl pin css -n node1 node2 node3

The labdb database was then started using sqlplus from the 10gR2 home after pinning the nodes. I then registered labdb with the 11gR2 Grid Infrastructure using the 10gR2 srvctl (/u01/app/oracle/db/bin/srvctl, with ORACLE_HOME set to the 10gR2 home). The srvctl used to manage the 10gR2 RAC database needs to be invoked from the 10gR2 home itself until the database is upgraded to 11gR2 RAC.
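Before starting the 10gR2 database, the pin status of each node can be checked with olsnodes from the Grid home. The sketch below assumes that olsnodes -t prints one line per node, with the node name first and Pinned or Unpinned as the last column; the function name unpinned_nodes is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read `olsnodes -t` output on stdin and print the
# names of nodes whose last column is "Unpinned".
unpinned_nodes() {
    awk '$NF == "Unpinned" { print $1 }'
}

# Usage (from the 11gR2 Grid home):
#   /u01/app/grid11201/bin/olsnodes -t | unpinned_nodes
```

No output would mean that every node is already pinned and the pre-11gR2 database should be able to reach the cluster manager.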

Upgrade 10gR2 RAC Database to 11gR2 RAC:

· Install the 11gR2 RAC database software
· Move the Listener LAB_LISTENER to 11gR2 RAC Home.
· Start Manual Process to upgrade the original labdb database from 10gR2 RAC to 11gR2 RAC.

Start the runInstaller from 11gR2 Real Application Cluster (RAC) Software Location:

/home/oracle/db11201/database/runInstaller

Real Application Cluster installation process:

Configure Security Updates:
Email: bhavin@oracledba.org
Ignore the “Connection Failed” alert.

Installation Option:
Install database software only

Node Selection:
Select all the nodes (node1, node2 and node3)

Product Language:
English

Database Edition:
Enterprise Edition

Installation Location:
Oracle Base: /u01/app/oracle
Software Location: /u01/app/oracle/db11201

Operating System Groups:
Database Administrator (OSDBA) Group: dba
Database Operator (OSOPER) Group: oinstall

Summary Screen:
Verified the information here and pressed “Finish” to start installation.

At the end of the installation, the script below needs to be executed on all the nodes as the root user.

/u01/app/oracle/db11201/root.sh

Move the Listener “LAB_LISTENER” from 10gR2 RAC DB Home to 11gR2 RAC database Home:

Invoked netca from the 11gR2 RAC home (/u01/app/oracle/db11201) and added the listener LAB_LISTENER (port TCP:1530) to the newly installed 11gR2 RAC home.

Copy the tnsnames.ora files from the 10gR2 DB home to the 11gR2 RAC home on all the nodes.

ssh node1 cp /u01/app/oracle/db/network/admin/tnsnames.ora /u01/app/oracle/db11201/network/admin/tnsnames.ora
ssh node2 cp /u01/app/oracle/db/network/admin/tnsnames.ora /u01/app/oracle/db11201/network/admin/tnsnames.ora
ssh node3 cp /u01/app/oracle/db/network/admin/tnsnames.ora /u01/app/oracle/db11201/network/admin/tnsnames.ora
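The same copy can be expressed as a loop over the node names, which scales better if the cluster has more nodes. This is a minimal sketch using the home paths from this setup. The RUN variable is a hypothetical addition that swaps ssh for another runner, for example echo to preview the commands.

```shell
#!/bin/bash
# Copy tnsnames.ora from the 10gR2 DB home to the 11gR2 RAC home on each
# node given as an argument. RUN is a hypothetical override of the remote
# runner (default: ssh); RUN=echo prints the commands instead of running them.
OLD_HOME=/u01/app/oracle/db
NEW_HOME=/u01/app/oracle/db11201

copy_tnsnames() {
    local node
    for node in "$@"; do
        ${RUN:-ssh} "$node" cp "$OLD_HOME/network/admin/tnsnames.ora" \
            "$NEW_HOME/network/admin/tnsnames.ora" || return 1
    done
}

# Usage: copy_tnsnames node1 node2 node3
# Preview only: RUN=echo copy_tnsnames node1 node2 node3
```

The loop stops at the first node where the copy fails, so a partial rollout is noticed immediately.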

Upgrade the Database labdb Manually:

This Metalink Note (ID 837570.1) was followed for manually upgrading database from

10gR2 RAC to 11gR2 RAC.

·

Ran the

/u01/app/oracle/db11201/rdbms/admin/utlu112i.sql

·

Fixed the warning returned from above script

regarding absolute parameter

·

Ignored the warnings regarding TIMEZONE as no action

is needed for timezone file version if DB is 10.2.0.2 or higher.

·

Ignored the warning regarding stale statistics and

EM.

·

Purged the recycle bin using PURGE DBA_RECYCLEBIN

·

Copied the instance specific init files and password

files from 10gR2 HOME to 11gR2 RAC Home.

·

Set the cluster_database to false

·

Stopped the database labdb. S

·

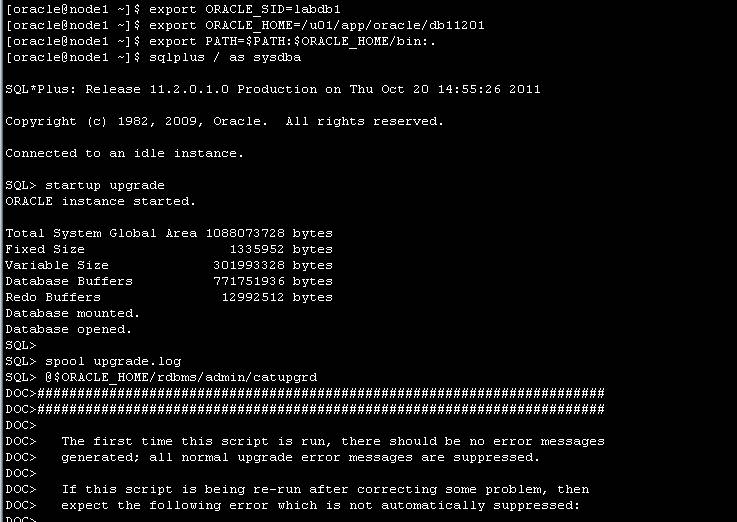

Set the 11gR2 RAC HOME

·

Updated the /etc/oratab file and modify the labdb HOME to 11gR2 HOME

·

Started the instance labdb1 on node1 using UPGRADE

option of start command.

·

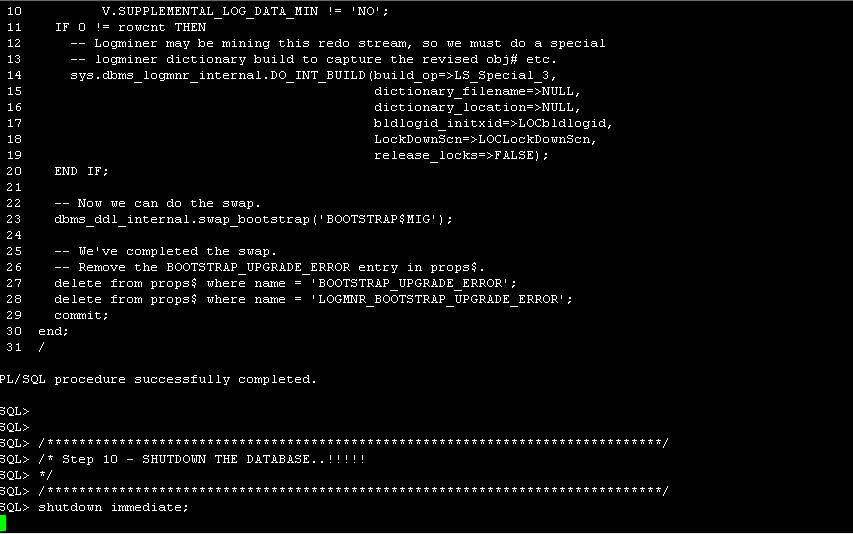

Ran the catupgrd.sql from $ORACLE_HOME/rdbms/admin

of 11gR2.

·

Started the database instance on node1.

·

Updated the cluster_database to true in spfile

·

Re-started the database instance on all the nodes.
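The SQL*Plus side of the steps above can be sketched as two short command sequences: one run from the 10gR2 home before the switch, and one run from the 11gR2 home in UPGRADE mode. The exact commands used originally are only visible in the screenshots, so this is a reconstruction under assumptions. The function names and the spool file name upgrade_labdb.log are hypothetical.

```shell
#!/bin/bash
# Hypothetical helpers that print SQL*Plus command sequences; nothing is
# executed against a database here.

# Phase 1: run as "/ as sysdba" with the environment set to the 10gR2 home.
print_pre_upgrade_sql() {
    cat <<'EOF'
PURGE DBA_RECYCLEBIN;
ALTER SYSTEM SET cluster_database=FALSE SCOPE=SPFILE;
SHUTDOWN IMMEDIATE
EOF
}

# Phase 2: run as "/ as sysdba" on node1 with the environment set to the
# 11gR2 home. "?" is SQL*Plus shorthand for ORACLE_HOME.
print_upgrade_sql() {
    cat <<'EOF'
STARTUP UPGRADE
SPOOL upgrade_labdb.log
@?/rdbms/admin/catupgrd.sql
SPOOL OFF
EOF
}

# Usage: print_pre_upgrade_sql > pre_upgrade.sql
#        print_upgrade_sql     > upgrade.sql
```

Keeping the two phases in separate files makes it harder to run the UPGRADE-mode commands by accident while the environment still points at the 10gR2 home.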

Post

Upgrade Steps:

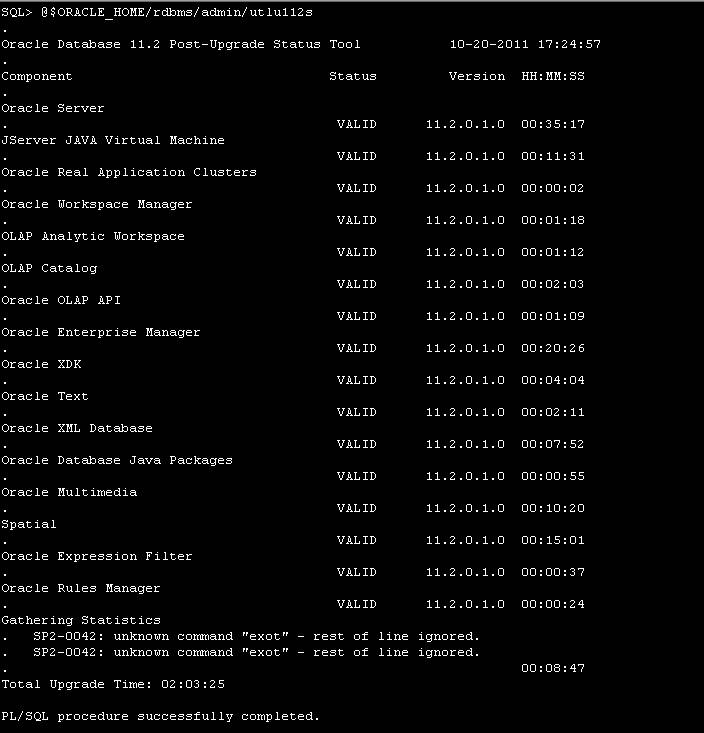

·

Ran the utlu112s.sql to get the status of the

upgrade of each component.

·

Ran the catuppst.sql to perform upgrade actions that

do not require database in UPGRADE mode.

·

Ran the utlrp.sql to recompile any INVALID objects

·

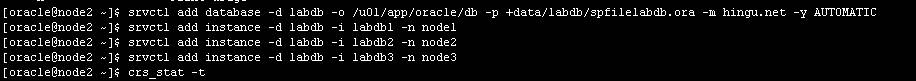

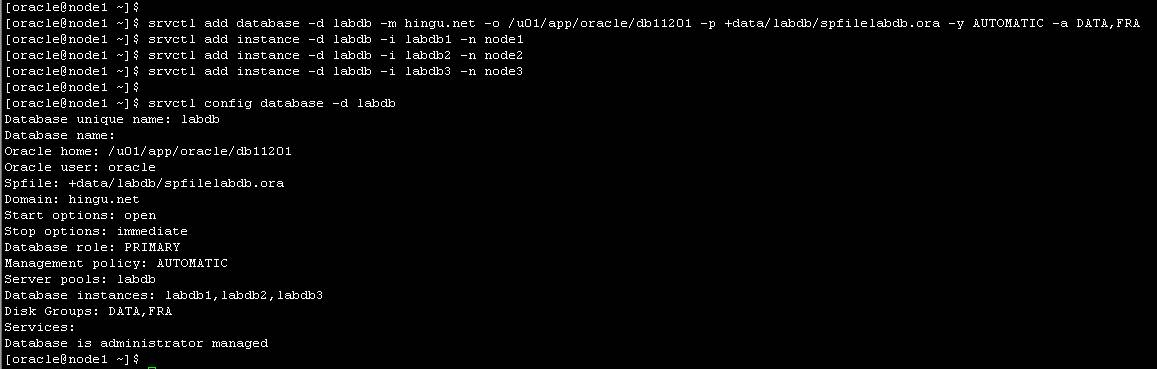

Upgraded the database configuration from 10gR2 to

11gR2 into the OCR by removing the labdb database and adding it back

·

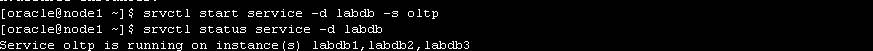

Added the service oltp to the 11gR2 RAC database.

![]()

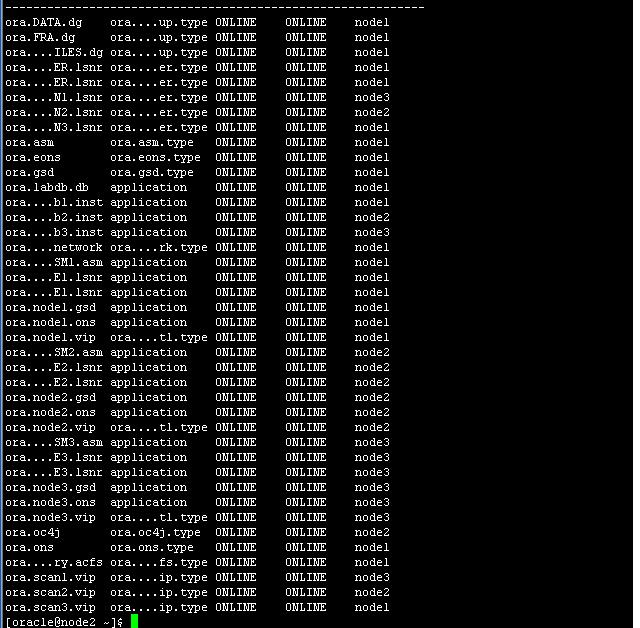

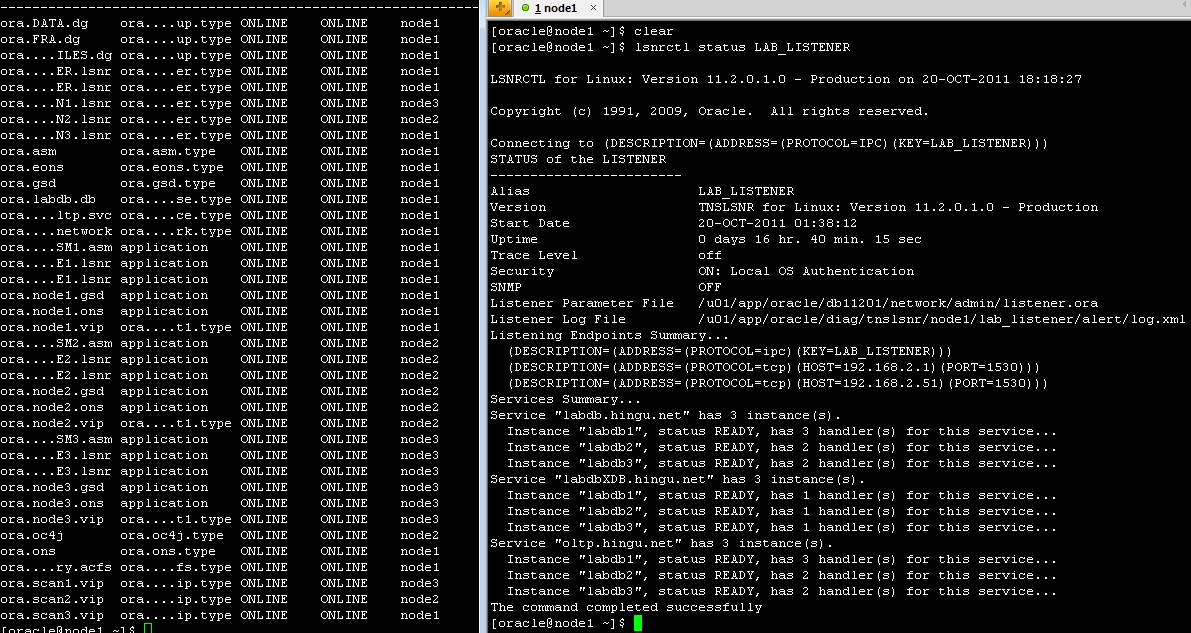
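The OCR re-registration of labdb and the oltp service can be sketched as a list of srvctl commands, removing the database with the 10gR2 srvctl and adding it back with the 11gR2 one. The helper below only prints the commands. The instance names labdb2 and labdb3, the instance-to-node mapping, and the preferred-instance list for oltp are assumptions, since only labdb1 on node1 is named in the text; the function name is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: print the srvctl commands that re-register labdb under
# the 11gR2 home. Instance names labdb2/labdb3 and the preferred-instance
# list for the oltp service are assumptions, not taken from the original.
OLD_HOME=/u01/app/oracle/db
NEW_HOME=/u01/app/oracle/db11201

print_reregister_cmds() {
    local i
    echo "$OLD_HOME/bin/srvctl remove database -d labdb"
    echo "$NEW_HOME/bin/srvctl add database -d labdb -o $NEW_HOME"
    for i in 1 2 3; do
        echo "$NEW_HOME/bin/srvctl add instance -d labdb -i labdb$i -n node$i"
    done
    echo "$NEW_HOME/bin/srvctl add service -d labdb -s oltp -r labdb1,labdb2,labdb3"
    echo "$NEW_HOME/bin/srvctl start database -d labdb"
}

# Usage: print_reregister_cmds     # review, then run each line by hand
```

Each srvctl should be run with ORACLE_HOME set to the home it is invoked from, as noted earlier for the 10gR2 srvctl.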

Final CRS Stack after the upgrade:

Rebooted all the 3 RAC nodes and verified that all the resources came up without any issues/errors.

reboot